Dagger.io, a Universal CI

Dagger.io is a project that was announced some time ago by Solomon Hykes, and its philosophy caught my attention.

It is a CI/CD tool that runs jobs in Docker containers. What sets Dagger apart is that it is not limited to YAML (like GitLab CI, GitHub Actions or Drone) or to a custom DSL (like Jenkins): jobs can be written in Python, Go, Java, TypeScript, or even as GraphQL queries.

It is somewhat similar to Pulumi, but for CI/CD jobs: where its competitor Terraform uses a DSL, Pulumi uses TypeScript, Python, Java, etc.

Since I use GitHub for my public projects, Gitea for my private projects (coupled with Drone), and GitLab for professional projects, I thought it was a good opportunity to test Dagger.io and get rid of my YAML files, whose syntax differs from one platform to another.

By converting my CI/CD jobs into code, I also want to get the same results on every platform and on my local machine.

So, let’s take a look at what Dagger.io is, how to install it, and how to use it. Since I’m familiar with the Python language, I will use the Dagger.io Python SDK!

Dagger.io Installation

You will need Python 3.10 or higher to use the Dagger.io Python SDK (a venv works too), as well as Docker, in which Dagger runs its engine.
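Before installing, a quick way to confirm that your interpreter meets this requirement is a short check like the one below. It is only a minimal sketch: the script and the python_is_supported function are illustrative names, not part of Dagger.

```python
"""Check that the current interpreter is recent enough for the Dagger Python SDK."""

import sys

# Minimum Python version required by the Dagger.io Python SDK (see above)
MINIMUM = (3, 10)


def python_is_supported(version_info=sys.version_info, minimum=MINIMUM) -> bool:
    """Return True if (major, minor) of version_info is at least `minimum`."""
    return tuple(version_info[:2]) >= minimum


if __name__ == "__main__":
    found = ".".join(str(part) for part in sys.version_info[:3])
    if python_is_supported():
        print(f"Python {found}: OK for dagger-io")
    else:
        print(f"Python {found} is too old: Python 3.10 or higher is required")
```

Run it with the same interpreter you will use for pip (for example python3 check_python.py) so that both see the same version.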

Installing Dagger.io is straightforward: just install the package with pip.

```shell
pip install dagger-io
```

And that’s it for the installation.

Troubleshooting: ERROR: Could not find a version that satisfies the requirement dagger-io (from versions: none)

If you encounter an error like this:

```
➜ ~ python3 -m pip install dagger-io
Defaulting to user installation because normal site-packages is not writeable
Collecting dagger-io
  Using cached dagger_io-0.4.2-py3-none-any.whl (52 kB)
Collecting cattrs>=22.2.0
[...]
  Using cached mdurl-0.1.2-py3-none-any.whl (10.0 kB)
Collecting multidict>=4.0
  Using cached multidict-6.0.4-cp310-cp310-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (114 kB)
ERROR: Exception:
Traceback (most recent call last):
  File "/usr/lib/python3/dist-packages/pip/_internal/cli/base_command.py", line 165, in exc_logging_wrapper
    status = run_func(*args)
  File "/usr/lib/python3/dist-packages/pip/_internal/cli/req_command.py", line 205, in wrapper
    return func(self, options, args)
  File "/usr/lib/python3/dist-packages/pip/_internal/commands/install.py", line 389, in run
    to_install = resolver.get_installation_order(requirement_set)
  File "/usr/lib/python3/dist-packages/pip/_internal/resolution/resolvelib/resolver.py", line 188, in get_installation_order
    weights = get_topological_weights(
  File "/usr/lib/python3/dist-packages/pip/_internal/resolution/resolvelib/resolver.py", line 276, in get_topological_weights
    assert len(weights) == expected_node_count
AssertionError
```

You probably have outdated versions of pip and setuptools. Upgrade them with the following command:

```shell
pip install --upgrade pip setuptools
```

If you do not want to run Docker as the root user, you will need to configure Docker's rootless mode (this is what I did). To do this, simply follow the official documentation.

First job

To start, create a file named hello-world.py and add the following code:

```python
"""Execute a command."""

import sys

import anyio

import dagger


async def test():
    async with dagger.Connection(dagger.Config(log_output=sys.stderr)) as client:
        python = (
            client.container()
            .from_("python:3.11-slim-buster")
            .with_exec(["python", "-V"])
        )

        version = await python.stdout()

    print(f"Hello from Dagger and {version}")


if __name__ == "__main__":
    anyio.run(test)
```

This is a simple job that will launch a Docker container with the python:3.11-slim-buster image and execute the python -V command.

To run the job, simply execute the script with Python:

```
➜ python3 hello-world.py
#1 resolve image config for docker.io/library/python:3.11-slim-buster
#1 DONE 1.7s
#2 importing cache manifest from dagger:10686922502337221602
#2 DONE 0.0s
#3 DONE 0.0s
#4 from python:3.11-slim-buster
#4 resolve docker.io/library/python:3.11-slim-buster
#4 resolve docker.io/library/python:3.11-slim-buster 0.2s done
#4 sha256:f0712d0bdb159c54d5bdce952fbb72c5a5d2a4399654d7f55b004d9fc01e189e 0B / 3.37MB 0.2s
#4 sha256:f0712d0bdb159c54d5bdce952fbb72c5a5d2a4399654d7f55b004d9fc01e189e 3.37MB / 3.37MB 0.3s done
#4 extracting sha256:80384e04044fa9b6493f2c9012fd1aa7035ab741147248930b5a2b72136198b1
#4 extracting sha256:80384e04044fa9b6493f2c9012fd1aa7035ab741147248930b5a2b72136198b1 0.3s done
#4 extracting sha256:f0712d0bdb159c54d5bdce952fbb72c5a5d2a4399654d7f55b004d9fc01e189e
#4 extracting sha256:f0712d0bdb159c54d5bdce952fbb72c5a5d2a4399654d7f55b004d9fc01e189e 0.2s done
#4 ...
#3
#3 0.224 Python 3.11.2
#3 DONE 0.3s
#4 from python:3.11-slim-buster
Hello from Dagger and Python 3.11.2
```

Congratulations, you have launched your first job with Dagger.io!

Now, let’s see how to create a slightly more complex script!

Dagger, Python, and Docker

So far, we haven’t taken full advantage of the power of Python, or even the features of Docker. Let’s see how to use both together.

As you probably know, I use Docusaurus to generate the HTML pages you are currently reading. Docusaurus lets me write my articles in Markdown and turns them into a website.

Since I don't pay much attention to the quality of my Markdown, I decided to create a job that checks the syntax of my Markdown files and fails if any of them has a problem.

To do this, I will use pymarkdownlnt, a strict and efficient linter.

You can install it using pip:

```shell
pip install pymarkdownlnt
```

Thus, our job will need to perform these steps sequentially:

- Start from a Python image (`FROM python:3.10-slim-buster`)
- Install pymarkdownlnt (`RUN pip install pymarkdownlnt`)
- Retrieve the project files (`COPY . .`)
- Run the linter on the Markdown files in each of the blog/, docs/ and i18n/ directories (`RUN pymarkdownlnt scan blog/ -r`)

We can translate the first 3 steps into Python code:

```python
lint = (
    client.container().from_("python:3.10-slim-buster")
    .with_exec("pip install pymarkdownlnt".split(" "))
    .with_mounted_directory("/data", src)
    .with_workdir("/data")
)
```

Next, I want to iterate over the blog/, docs/ and i18n/ directories and run the linter on each of them. This is where plain Python code comes in, rather than Dagger instructions alone.

One detail I haven't mentioned yet: a Dagger pipeline is lazy, so we can keep modifying our job as long as it hasn't been launched, i.e., before the await statement that triggers its execution and waits for the result.

So let's keep the container definition above and add three commands to our job, one per directory:

```python
for i in ["blog", "docs", "i18n"]:
    lint = lint.with_exec(["pymarkdownlnt", "scan", i, "-r"])
```

Pretty simple, right?
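The loop reassigns lint on every iteration because each with_exec call returns a new container definition instead of modifying the existing one, and nothing runs until the result is awaited. The toy model below illustrates this; it is plain Python, not the Dagger API (ToyContainer and its methods are hypothetical, and the standard library's asyncio stands in for anyio to keep it self-contained). It shows how forgetting the reassignment silently drops steps:

```python
"""Toy model of an immutable, lazily evaluated pipeline (not the real Dagger API)."""

import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyContainer:
    # Commands recorded so far; nothing is executed when they are added
    steps: tuple = ()

    def with_exec(self, args):
        # Return a NEW object with one more step; self is left unchanged
        return ToyContainer(self.steps + (tuple(args),))

    async def stdout(self):
        # Only at this point would the recorded steps actually be run
        return "\n".join(" ".join(step) for step in self.steps)


async def main():
    base = ToyContainer().with_exec(["pip", "install", "pymarkdownlnt"])

    # Correct: reassign the variable so that every step is kept
    lint = base
    for directory in ["blog", "docs", "i18n"]:
        lint = lint.with_exec(["pymarkdownlnt", "scan", directory, "-r"])

    # Mistake: the returned objects are discarded, so `forgotten` keeps only one step
    forgotten = base
    for directory in ["blog", "docs", "i18n"]:
        forgotten.with_exec(["pymarkdownlnt", "scan", directory, "-r"])

    print(await lint.stdout())
    print("---")
    print(await forgotten.stdout())


if __name__ == "__main__":
    asyncio.run(main())
```

The first print lists the install command followed by the three scans, while the second only lists the install command.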

When I run my job, I get many errors about rules that I haven't followed. This is expected: some Docusaurus-specific syntax triggers linter errors that I can't fix.

So I list the rules that don't apply to my files and disable them:

```python
lint_rules_to_ignore = ["MD013", "MD003", "MD041", "MD022", "MD023", "MD033", "MD019"]
# Format accepted by pymarkdownlnt: "MD013,MD003,MD041,MD022,MD023,MD033,MD019"
for i in ["blog", "docs", "i18n"]:
    lint = lint.with_exec(["pymarkdownlnt", "-d", ",".join(lint_rules_to_ignore), "scan", i, "-r"])
```
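To check the exact command lines passed to with_exec without starting a Dagger session, the argument lists can be built by a plain function. This is only a sketch: lint_commands is a hypothetical helper, not part of Dagger or pymarkdownlnt; each list it returns could be passed to lint.with_exec(...) in the loop above.

```python
"""Build the pymarkdownlnt command lines used by the linting job."""

RULES_TO_IGNORE = ["MD013", "MD003", "MD041", "MD022", "MD023", "MD033", "MD019"]
DIRECTORIES = ["blog", "docs", "i18n"]


def lint_commands(directories, rules_to_ignore):
    """Return one argument list per directory, in the form used with with_exec()."""
    # The disabled rules are passed as a single comma-separated string after -d
    disabled = ",".join(rules_to_ignore)
    return [
        ["pymarkdownlnt", "-d", disabled, "scan", directory, "-r"]
        for directory in directories
    ]


if __name__ == "__main__":
    for command in lint_commands(DIRECTORIES, RULES_TO_IGNORE):
        print(" ".join(command))
```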

Here is our complete script:

```python
"""Markdown linting script."""

import sys

import anyio

import dagger


async def markdown_lint():
    # Rules that the Docusaurus syntax makes impossible to satisfy
    lint_rules_to_ignore = ["MD013", "MD003", "MD041", "MD022", "MD023", "MD033", "MD019"]

    async with dagger.Connection(dagger.Config(log_output=sys.stderr)) as client:
        # Project directory on the host, mounted into the container
        src = client.host().directory("./")

        lint = (
            client.container().from_("python:3.10-slim-buster")
            .with_exec("pip install pymarkdownlnt".split(" "))
            .with_mounted_directory("/data", src)
            .with_workdir("/data")
        )

        # One linter run per content directory
        for i in ["blog", "docs", "i18n"]:
            lint = lint.with_exec(["pymarkdownlnt", "-d", ",".join(lint_rules_to_ignore), "scan", i, "-r"])

        # execute
        await lint.stdout()

    print("Markdown lint is FINISHED!")


if __name__ == "__main__":
    try:
        anyio.run(markdown_lint)
    except Exception as exc:
        # Exit with a non-zero status so that the CI platform marks the job as failed
        print(f"Error in Linting: {exc}", file=sys.stderr)
        sys.exit(1)
```

After this modification, my job works without any issues!

```shell
python3 .ci/markdown_lint.py
```

Let’s recap what we can do:

- Launch a Docker image

- Execute commands in a container

- Copy files from the host to the container

I think that will be sufficient for most of my CI needs. However, there is still one feature missing: the ability to build a Docker image and push it to a registry.

Build & Push a Docker Image

It is possible to authenticate to a registry directly through Dagger. In my case, I assume that the host on which I run my job is already authenticated.

For the purpose of this demonstration, I will use the ttl.sh registry, a public and anonymous registry that allows storing Docker images for a maximum duration of 24 hours.

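```python
import os
import sys

import anyio

import dagger


async def docker_image_build():
    async with dagger.Connection(dagger.Config(log_output=sys.stderr)) as client:
        # Build context: the current directory on the host
        src = client.host().directory("./")

        build = (
            client.container()
            .build(
                context=src,
                dockerfile="Dockerfile",
                build_args=[
                    # APP is read from the environment, with a default value
                    dagger.BuildArg(name="APP", value=os.environ.get("APP", "TheBidouilleurxyz"))
                ],
            )
        )

        # Push the image to ttl.sh; the tag (1h) sets how long it is kept
        image = await build.publish(address="ttl.sh/thebidouilleur:1h")

    print(f"Published {image}")


if __name__ == "__main__":
    anyio.run(docker_image_build)
```

The above code builds my Docker image from the Dockerfile in the current directory and pushes it to ttl.sh as ttl.sh/thebidouilleur:1h.

One detail in this code is the use of build args: the APP build argument takes its value from the APP environment variable and falls back to the default value TheBidouilleurxyz if that variable is not defined.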

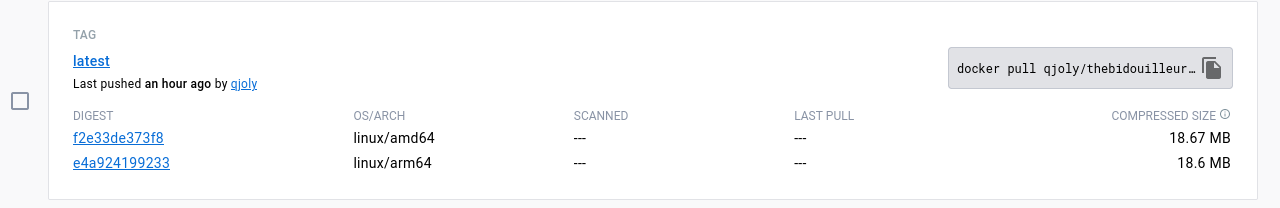

Now, I want to create a similar job that builds a multi-architecture Docker image for ARM64 and AMD64 (one of my Kubernetes clusters is made of Raspberry Pis).

Build & push a multi-architecture Docker image

Before integrating it into a Dagger job, you first need to set up multi-architecture builds on your machine (typically by registering QEMU emulators with binfmt_misc).

Dagger provides the dagger.Platform type, which we pass to client.container() to specify the platform the image should be built for.

We loop over the architectures we want to build for, creating one build per platform, and then pass all of these variants to publish, which pushes them under a single tag.

```python
import sys

import anyio

import dagger


async def docker_build():
    platforms = ["linux/amd64", "linux/arm64"]

    async with dagger.Connection(dagger.Config(log_output=sys.stderr)) as client:
        src = client.host().directory(".")

        variants = []
        for platform in platforms:
            print(f"Building for {platform}")
            # Target platform of this variant
            platform = dagger.Platform(platform)
            build = (
                client.container(platform=platform)
                .build(
                    context=src,
                    dockerfile="Dockerfile",
                )
            )
            variants.append(build)

        # Publish every variant under a single multi-platform tag
        await client.container().publish("ttl.sh/dagger_test:1h", platform_variants=variants)


if __name__ == "__main__":
    anyio.run(docker_build)
```

Create a Launcher

Now that we have seen how to use Dagger, let’s create a launcher that will allow us to run our jobs one by one.

To run our tasks asynchronously, we use the anyio library in each of our scripts.

```python
import anyio

import markdown_lint
import docusaurus_build
import multi_arch_build as docker_build

if __name__ == "__main__":
    # Each anyio.run() call waits for its job to finish: the jobs run one after the other
    print("Running jobs sequentially using anyio")
    anyio.run(markdown_lint.markdown_lint)
    anyio.run(docusaurus_build.docusaurus_build)
    anyio.run(docker_build.docker_build)
```

This launcher imports the markdown_lint, docusaurus_build, and docker_build functions from the markdown_lint.py, docusaurus_build.py, and multi_arch_build.py files, then executes them one after the other.

The sole purpose of this launcher is to be able to run our jobs with a single command.
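This launcher runs the jobs sequentially: each anyio.run call waits for the previous job to finish. If the jobs are independent, they could instead be started concurrently within a single event loop. The sketch below uses the standard library's asyncio.gather instead of anyio so that it is self-contained, and fake_job is a hypothetical stand-in that only sleeps; a real launcher would await markdown_lint.markdown_lint() and the other job functions instead.

```python
"""Sketch of a launcher that runs independent jobs concurrently (asyncio stand-in)."""

import asyncio
import time


# Hypothetical stand-in for a real Dagger job (markdown_lint, docusaurus_build, ...)
async def fake_job(name, seconds):
    await asyncio.sleep(seconds)
    return f"{name} done"


async def run_all():
    # gather() starts all coroutines at once and waits for every one of them;
    # if one job raises, its exception propagates to the caller
    return await asyncio.gather(
        fake_job("markdown_lint", 0.3),
        fake_job("docusaurus_build", 0.3),
        fake_job("docker_build", 0.3),
    )


if __name__ == "__main__":
    start = time.perf_counter()
    results = asyncio.run(run_all())
    print(results)
    # Roughly the duration of the longest job, not the sum of all three
    print(f"elapsed: {time.perf_counter() - start:.2f}s")
```

The trade-off is readability: the logs of concurrent jobs are interleaved on stderr, which makes a failure harder to follow than with the sequential launcher.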

Conclusion

Dagger is a very promising tool! It may not replace current solutions like GitHub Actions or GitLab CI, but it addresses a specific need: having the same CI regardless of the platform.

In short, Dagger is a tool worth testing, and I think I will use it for most of my personal projects.

I hope you enjoyed this article, and please feel free to provide your feedback.